type: Post
status: Published
date: May 23, 2025
slug: research
tags: R, Python
category: Research Experience

Here are the key highlights:

- Assessed nine years of longitudinal ranking data for regional peers and the top 50 part-time MBA programs to benchmark the Smith School of Business against competing programs

- Developed mixed regression models, with adjustments for GRE score inputs, to evaluate the impact of enrollment size, student test scores, and work experience on rankings

- Uncovered discrepancies between published ranking methodologies and empirical results, and translated the statistical insights into actionable recommendations for academic leadership and student selectivity initiatives
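For readers who want to see what a mixed regression of this kind can look like, here is a minimal sketch assuming a random intercept for each program and fixed effects for the three factors named above. The exact specification used in the project, including how the GRE data were adjusted, is not described in this post, so the symbols below are illustrative assumptions.

```latex
\mathrm{Rank}_{it} = \beta_0 + \beta_1\,\mathrm{Enroll}_{it} + \beta_2\,\mathrm{TestScore}_{it} + \beta_3\,\mathrm{WorkExp}_{it} + u_i + \varepsilon_{it},
\qquad u_i \sim \mathcal{N}(0,\sigma_u^2), \quad \varepsilon_{it} \sim \mathcal{N}(0,\sigma^2)
```

Here $i$ indexes programs and $t$ indexes years. The program-specific intercept $u_i$ lets each program keep its own baseline rank across years, which is a common way to account for repeated observations of the same program in longitudinal data.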

During the spring semester, I worked as a research assistant on a project requested by the Dean's office to support data-driven decision-making on program selectivity and rankings. I had not initially expected to take on a research role, but the project offered a unique opportunity to do applied research aimed at improving program strategy through data analysis.

📝 Why are we doing this?

Need from the Dean's office

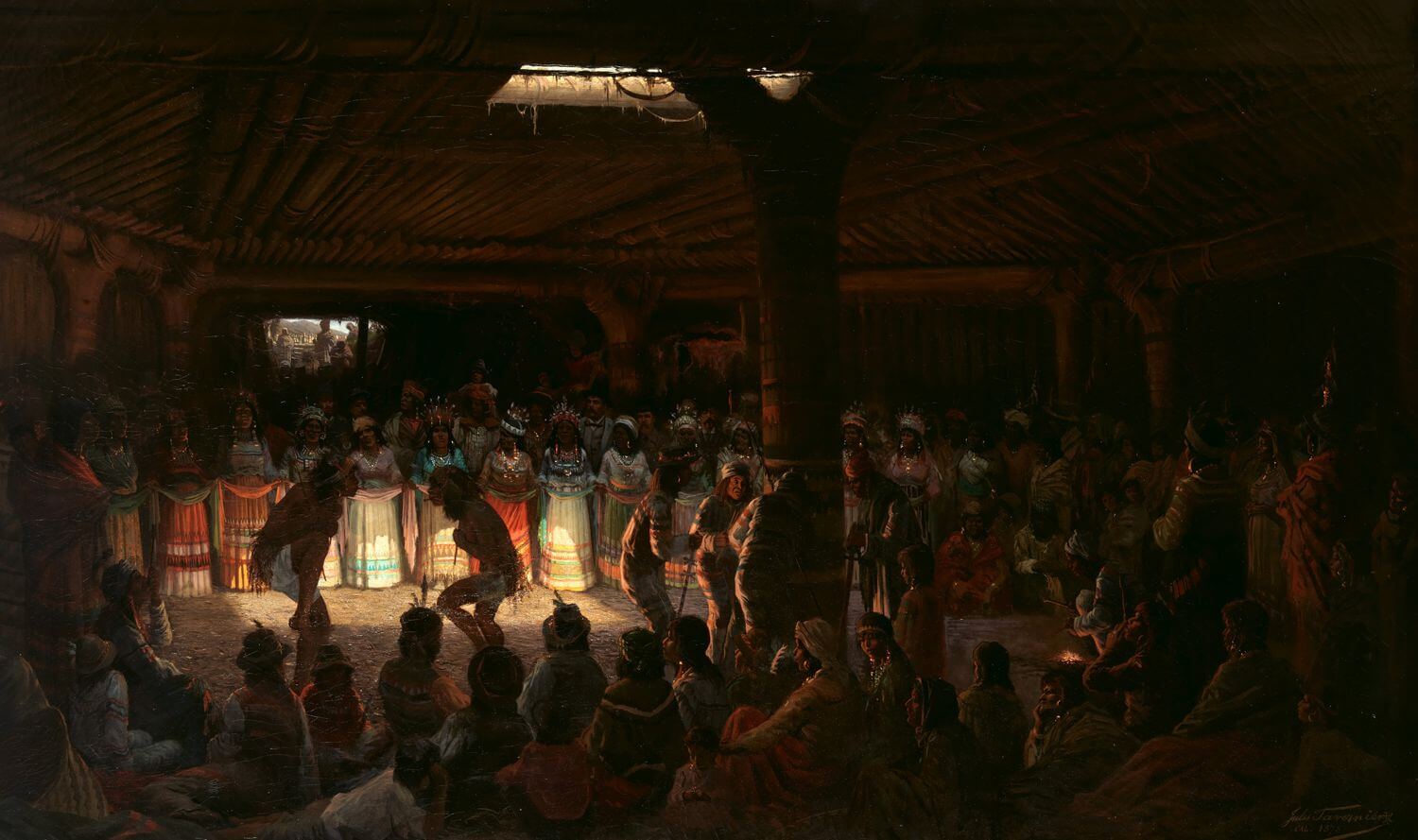

The Dean's office aimed to strengthen its program selection process and establish a ranking framework grounded in empirical data. Our research was designed to explore and replicate the methodologies used by existing ranking systems, such as U.S. News, the Financial Times, and Bloomberg, and to evaluate how our school performs relative to peer institutions. The ultimate goal was to develop a model that provides actionable insights into program rankings, accounting for factors such as peer comparison, historical performance, and quantitative indicators of program quality.

📑 How we collect and analyze data

Data Collection and Exploration: Working with incomplete data

A significant part of the project involved collecting and exploring data from multiple sources.

We primarily gathered information from publicly available websites and internal records, supplemented by direct inquiries to managers about missing data. This process involved numerous meetings to clarify data definitions, verify completeness, and refine our research questions.

Because our data were often incomplete or did not align perfectly with the ranking methodologies, we had to make pragmatic adjustments. For instance, the ranking formulas required annual numbers of part-time students, but we only had cumulative counts over three years. In such cases, we either used the closest available variable or engineered a substitute feature, keeping our analyses robust despite these limitations.
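One simple way to engineer such a feature is sketched below. It assumes, hypothetically, that students are spread evenly across the three-year window, so the annual figure is just the cumulative total divided by the window length. The function name, program labels, and counts are illustrative placeholders, not project data.

```python
from typing import Dict


def annual_proxy(cumulative_counts: Dict[str, float], window_years: int = 3) -> Dict[str, float]:
    """Turn multi-year cumulative counts into an estimated annual count per program.

    Hypothetical assumption: enrollment is spread evenly over the window,
    so each annual estimate is the cumulative total divided by the window length.
    """
    if window_years <= 0:
        raise ValueError("window_years must be positive")
    return {program: total / window_years for program, total in cumulative_counts.items()}


# Hypothetical three-year totals for two programs (illustrative numbers only).
three_year_totals = {"Program A": 900, "Program B": 450}
print(annual_proxy(three_year_totals))  # {'Program A': 300.0, 'Program B': 150.0}
```

An even split is the crudest possible proxy; if any single year's figure were known, it could be used to weight the split instead.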

The exploration phase required constant adaptation because the data were incomplete or imperfect. To fill gaps, such as those in the tuition variable, we developed alternative strategies, including proxy variables and simple forecast models. This experience strengthened our problem-solving skills and highlighted the importance of flexibility in applied research.
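A simple forecast model for a variable like tuition could look like the sketch below. It assumes, as a simplification, that tuition follows a roughly linear trend over time and fills missing years from an ordinary least squares fit on the observed years. The function names and dollar figures are hypothetical; the post does not describe the project's actual forecast model.

```python
from typing import Callable, Dict, List, Optional


def fit_linear_trend(years: List[int], values: List[float]) -> Callable[[int], float]:
    """Ordinary least squares fit of value against year; returns a predictor.

    The fit is centred on the mean year, which avoids a huge intercept
    when the years are large numbers such as 2024.
    """
    n = len(years)
    if n < 2:
        raise ValueError("need at least two observed years")
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    if sxx == 0:
        raise ValueError("observed years must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    slope = sxy / sxx
    return lambda year: mean_y + slope * (year - mean_x)


def impute_tuition(series: Dict[int, Optional[float]]) -> Dict[int, float]:
    """Fill years whose tuition is None with the linear-trend prediction;
    observed years keep their reported value."""
    observed = {year: value for year, value in series.items() if value is not None}
    predict = fit_linear_trend(list(observed), list(observed.values()))
    return {
        year: value if value is not None else predict(year)
        for year, value in series.items()
    }


# Hypothetical tuition figures with one missing year.
tuition = {2019: 60000.0, 2020: 62000.0, 2021: None, 2022: 66000.0}
filled = impute_tuition(tuition)
print(round(filled[2021]))  # 64000
```

A linear trend is only reasonable over a short horizon; with more years of data, a model that allows for step changes in tuition policy may fit better.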

Methodology and Modeling

Once the data were collected, we explored various modeling approaches to replicate ranking methodologies and to understand the impact of different factors on program performance. This included building predictive models to estimate missing variables, such as tuition fees, and integrating them into the broader analysis. Our modeling efforts allowed us to identify key drivers of program rankings and to simulate how changes in certain factors might influence overall outcomes.
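One way to picture how a published methodology can be replicated and then used for what-if simulation is a weighted composite of standardized indicators. The sketch below is an illustration only: the indicator names, weights, and values are hypothetical, and each ranking publisher (U.S. News, the Financial Times, Bloomberg) defines its own inputs, weights, and normalization rules.

```python
from statistics import mean, pstdev
from typing import Dict

Indicators = Dict[str, Dict[str, float]]  # program -> indicator -> value


def composite_ranks(data: Indicators, weights: Dict[str, float]) -> Dict[str, int]:
    """Rank programs by a weighted sum of z-scored indicators.

    Hypothetical simplification: every indicator is treated as
    "higher is better", and rank 1 goes to the highest composite score.
    """
    programs = list(data)
    scores = {p: 0.0 for p in programs}
    for indicator, weight in weights.items():
        values = [data[p][indicator] for p in programs]
        mu, sigma = mean(values), pstdev(values)
        for p in programs:
            z = (data[p][indicator] - mu) / sigma if sigma > 0 else 0.0
            scores[p] += weight * z
    ordered = sorted(programs, key=lambda p: scores[p], reverse=True)
    return {p: position + 1 for position, p in enumerate(ordered)}


def what_if(data: Indicators, weights: Dict[str, float],
            program: str, indicator: str, new_value: float) -> Dict[str, int]:
    """Re-rank after changing one indicator for one program (input is not mutated)."""
    scenario = {p: dict(values) for p, values in data.items()}
    scenario[program][indicator] = new_value
    return composite_ranks(scenario, weights)


# Hypothetical programs, indicators and weights.
base = {
    "A": {"test_score": 600, "work_exp": 5},
    "B": {"test_score": 620, "work_exp": 4},
    "C": {"test_score": 640, "work_exp": 6},
}
weights = {"test_score": 0.6, "work_exp": 0.4}
print(composite_ranks(base, weights))                  # {'C': 1, 'B': 2, 'A': 3}
print(what_if(base, weights, "A", "test_score", 660))  # {'A': 1, 'C': 2, 'B': 3}
```

Because every score is standardized against the whole field, improving one program's indicator also shifts the z-scores of its competitors, which is why the simulation re-ranks everyone rather than adjusting a single score.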

A mixed regression model was the main model we used to forecast rankings, and we used its coefficients to make quantitative recommendations. Beyond modeling, we also compared results across four peer groups and took a close look at the most recent year to get the full picture.
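The two steps in the paragraph above can be sketched as follows, assuming a linear model in which a smaller rank number is better. `predicted_rank_change` and `change_needed` turn coefficients into what-if statements, and `latest_year_gap` compares a school's rank with the median rank of each peer group in the most recent year. All function names, coefficients, program labels, and rankings are hypothetical placeholders, not results from the project.

```python
from statistics import median
from typing import Dict, List, Tuple


def predicted_rank_change(coefs: Dict[str, float], changes: Dict[str, float]) -> float:
    """Sum of coefficient * change over the factors being changed.

    In a linear model the predicted rank moves by this amount; a negative
    value means a smaller, i.e. better, rank number.
    """
    return sum(coefs[name] * delta for name, delta in changes.items())


def change_needed(coef: float, places_up: float) -> float:
    """Change in a single factor needed to improve the rank by `places_up`
    positions, holding every other factor fixed."""
    if coef == 0:
        raise ValueError("a zero coefficient cannot move the predicted rank")
    return -places_up / coef


def latest_year_gap(records: List[Tuple[str, str, int, int]], school: str) -> Dict[str, float]:
    """records holds (program, peer_group, year, rank) rows.

    For the most recent year, return the school's rank minus the median rank
    of each peer group (the school itself is excluded from every group).
    A positive gap means the school ranks worse than that group's median.
    """
    latest = max(year for _, _, year, _ in records)
    this_year = [row for row in records if row[2] == latest]
    school_rank = next((rank for program, _, _, rank in this_year if program == school), None)
    if school_rank is None:
        raise ValueError(f"{school} has no ranking in {latest}")
    groups: Dict[str, List[int]] = {}
    for program, group, _, rank in this_year:
        if program != school:
            groups.setdefault(group, []).append(rank)
    return {group: school_rank - median(ranks) for group, ranks in groups.items()}


# Hypothetical coefficients and rankings.
coefs = {"avg_test_score": -0.25, "work_exp_years": -1.5}
print(predicted_rank_change(coefs, {"avg_test_score": 8, "work_exp_years": 0.5}))  # -2.75
print(change_needed(coefs["avg_test_score"], 2))  # 8.0
rows = [
    ("Smith", "Regional", 2024, 20), ("P1", "Regional", 2024, 15), ("P2", "Regional", 2024, 17),
    ("P3", "Top 50", 2024, 10), ("P4", "Top 50", 2024, 40), ("Smith", "Regional", 2023, 22),
]
print(latest_year_gap(rows, "Smith"))  # {'Regional': 4.0, 'Top 50': -5.0}
```

Reading coefficients this way assumes the relationship stays roughly linear over the range of the proposed change; large changes extrapolate beyond the observed data.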

Ultimately, the findings will be compiled into a report for internal use by the Smith Business Group and the Dean's office.


- Author: Yunzhu HUANG

- URL: /article/research

- Copyright: Unless otherwise stated, all articles in this blog are licensed under CC BY-NC-SA. Please credit the source!
